Practice questions for Microsoft exam 70-775, Perform Data Engineering on Microsoft Azure HDInsight (beta).

NEW QUESTION 1

Note: This question is part of a series of questions that use the same or similar answer choices. An answer choice may be correct for more than one question in the series. Each question is independent of the other questions in this series. Information and details provided in a question apply only to that question.

You need to deploy an enterprise data warehouse that will support in-memory analytics. The data warehouse must support connections that use the Microsoft Hive ODBC Driver and Beeline. The data warehouse will be managed by using Apache Ambari only.

What should you do?

Answer: F

Explanation: References: https://docs.microsoft.com/en-us/azure/hdinsight/hdinsight-hadoop-use-interactive-hive

NEW QUESTION 2

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You need to deploy an HDInsight cluster that will provide in-memory processing, interactive queries, and micro-batch stream processing. The cluster has the following requirements:

• Uses Azure Data Lake Store as the primary storage

• Can be used by HDInsight applications

What should you do?

Answer: B

NEW QUESTION 3

You have an Apache Spark cluster in Azure HDInsight. You plan to join a large table and a lookup table.

You need to minimize data transfers during the join operation. What should you do?

Answer: B
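
The answer choices for this question are not reproduced here, so the sketch below does not claim to show what option B is. It only illustrates one common way to reduce data movement when joining a large table to a small lookup table in Spark: a broadcast join, where the small table is copied to every executor so the large table does not need to be shuffled. The object name, table contents, and column names are hypothetical, and the local master setting is an assumption for running the sketch outside a cluster.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.broadcast

object BroadcastJoinSketch {
  // Joins a large table to a small lookup table on a shared column.
  // broadcast() hints that the lookup table should be shipped to every
  // executor, so the large table is joined in place rather than shuffled.
  def joinWithLookup(large: DataFrame, lookup: DataFrame): DataFrame =
    large.join(broadcast(lookup), Seq("country_code"))

  def main(args: Array[String]): Unit = {
    // Local session for illustration only (assumption); on a cluster the
    // session is normally provided by the environment.
    val spark = SparkSession.builder()
      .appName("broadcast-join-sketch")
      .master("local[*]")
      .getOrCreate()
    import spark.implicits._

    // Hypothetical sample data standing in for the large and lookup tables.
    val orders = Seq((1, "US", 20.0), (2, "DE", 35.5), (3, "US", 12.0))
      .toDF("order_id", "country_code", "amount")
    val countries = Seq(("US", "United States"), ("DE", "Germany"))
      .toDF("country_code", "country_name")

    joinWithLookup(orders, countries).show()
    spark.stop()
  }
}
```

A broadcast join only helps when the lookup table fits comfortably in executor memory; for two large tables, Spark falls back to a shuffle-based join.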

NEW QUESTION 4

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are implementing a batch processing solution by using Azure HDInsight. You have a table that contains sales data.

You plan to implement a query that will return the number of orders by zip code.

You need to minimize the execution time of the queries and to maximize the compression level of the resulting data.

What should you do?

Answer: B

NEW QUESTION 5

HOTSPOT

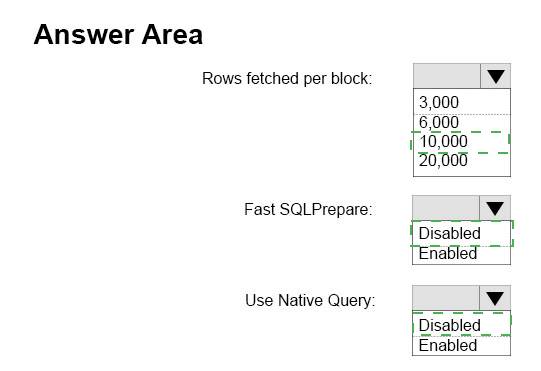

You install the Microsoft Hive ODBC Driver on a computer that runs Windows 10 and has the 64-bit version of Microsoft Office 2016 installed.

You deploy a new Apache Interactive Hive cluster in Azure HDInsight. The cluster is hosted at myHDICluster.azurehdinsight.net and contains a Hive table named hivesampletable that has 200,000 rows.

You plan to use HiveQL exclusively for the queries. The queries will return from 6,000 to 10,000 rows 90 percent of the time.

You need to configure a data source to ensure that you can use Microsoft Excel to access the data. The solution must ensure that the Hive queries execute as quickly as possible.

How should you configure the Advanced Options from the Microsoft Hive ODBC Driver DSN Setup dialog box? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Answer:

Explanation: References: https://docs.microsoft.com/en-us/azure/hdinsight/hdinsight-connect-excel-hive-odbc-driver

NEW QUESTION 6

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are implementing a batch processing solution by using Azure HDInsight. You have data stored in Azure.

You need to ensure that you can access the data by using Azure Active Directory (Azure AD) identities.

What should you do?

Answer: G

Explanation: References: https://docs.microsoft.com/en-us/azure/data-factory/concepts-datasets-linkedservices

NEW QUESTION 7

You plan to copy data from Azure Blob storage to an Azure SQL database by using Azure Data Factory. Which file formats can you use?

Answer: D

Explanation: References: https://docs.microsoft.com/en-us/azure/data-factory/supported-file-formats-and-compression-codecs

NEW QUESTION 8

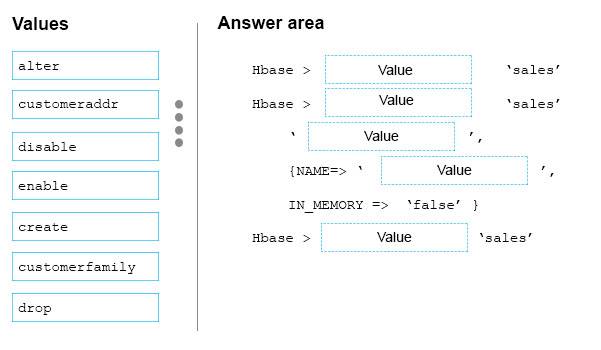

DRAG DROP

You have an Apache HBase cluster in Azure HDInsight. The cluster has a table named sales that contains a column family named customerfamily.

You need to add a new column family named customeraddr to the sales table.

How should you complete the command? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once or not at all.

Answer:

Explanation:

hbase> disable 'sales'

hbase> alter 'sales', 'customerfamily', {NAME => 'customeraddr', IN_MEMORY => false}

hbase> enable 'sales'

NEW QUESTION 9

You have an Apache Hadoop cluster in Azure HDInsight that has a head node and three data nodes. You have a MapReduce job.

You receive a notification that a data node failed.

You need to identify which component caused the failure. Which tool should you use?

Answer: C

NEW QUESTION 10

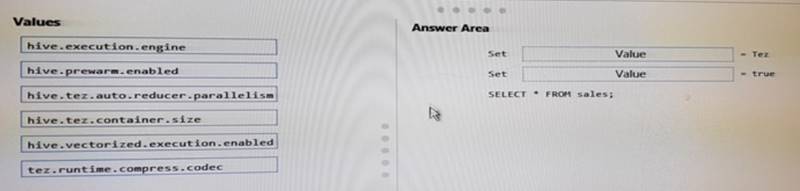

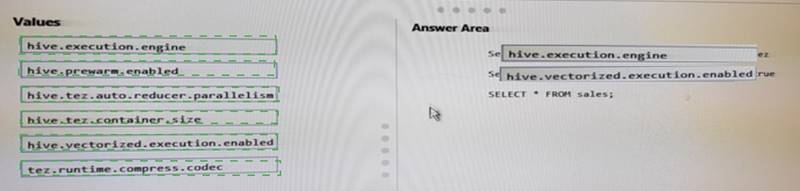

DRAG DROP

You have an Apache Hive cluster in Azure HDInsight. You need to tune a Hive query to meet the following requirements:

• Use the Tez engine.

• Process 1,024 rows in a batch.

How should you complete this query? To answer, drag the appropriate values to the correct targets.

Answer:

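Explanation: Set hive.execution.engine to tez to select the Tez engine, and set hive.vectorized.execution.enabled to true; vectorized execution processes rows in batches of 1,024.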

NEW QUESTION 11

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

Start of Repeated Scenario:

You are planning a big data infrastructure by using an Apache Spark Cluster in Azure HDInsight. The cluster has 24 processor cores and 512 GB of memory.

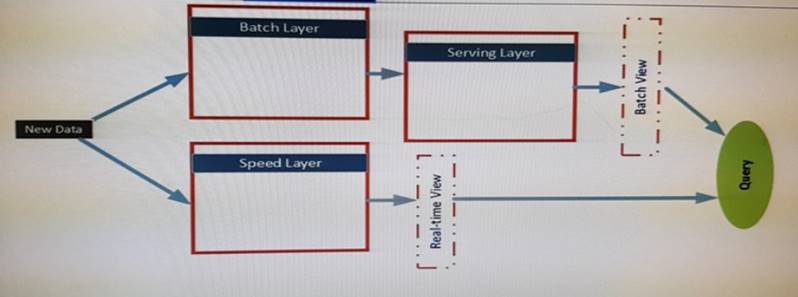

The Architecture of the infrastructure is shown in the exhibit:

The architecture will be used by the following users:

* Support analysts who run applications that will use REST to submit Spark jobs.

* Business analysts who use JDBC and ODBC client applications from a real-time view. The business analysts run monitoring queries to access aggregate results for 15 minutes. The results will be referenced by subsequent queries.

* Data analysts who publish notebooks drawn from batch layer, serving layer and speed layer queries. All of the notebooks must support native interpreters for data sources that are batch processed. The serving layer queries are written in Apache Hive and must support multiple sessions. Unique GUIDs are used across the data sources, which allow the data analysts to use Spark SQL.

The data sources in the batch layer share a common storage container. The following data sources are used:

* Hive for sales data

* Apache HBase for operations data

* HBase for logistics data by using a single region server.

End of Repeated Scenario.

The business analysts report that they experience performance issues when they run the monitoring queries.

You troubleshoot the performance issues and discover that the intermediate tables generated when the analysts run the queries cause pressure for the Java Virtual Machine (JVM) garbage collection per job.

Which configuration settings should you modify to alleviate the performance issues?

Answer: D

NEW QUESTION 12

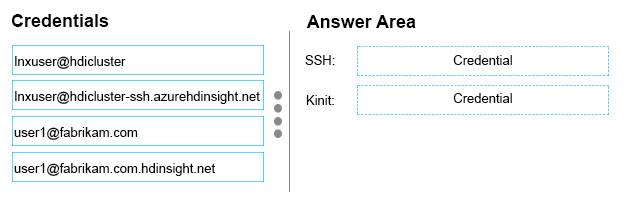

DRAG DROP

You have a domain-joined Apache Hadoop cluster in Azure HDInsight named hdicluster. The Linux account for hdicluster is named Inxuser.

Your Active Directory account is named user1@fabrikam.com. You need to run Hadoop commands from an SSH session.

Which credentials should you use? To answer, drag the appropriate credentials to the correct commands. Each credential may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

Answer:

Explanation: References: https://docs.microsoft.com/en-us/azure/hdinsight/hdinsight-hadoop-linux-use-ssh-unix

NEW QUESTION 13

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

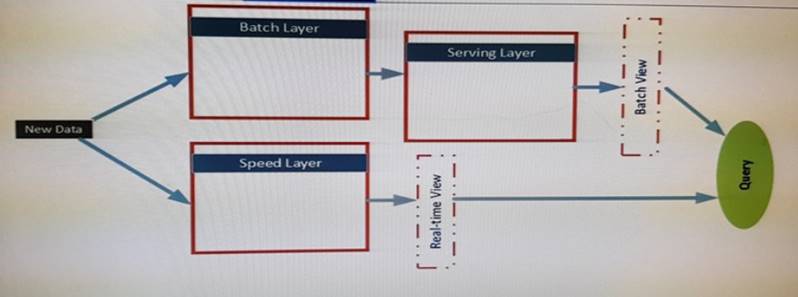

Start of Repeated Scenario:

You are planning a big data infrastructure by using an Apache Spark Cluster in Azure HDInsight. The cluster has 24 processor cores and 512 GB of memory.

The architecture of the infrastructure is shown in the exhibit:

The architecture will be used by the following users:

* Support analysts who run applications that will use REST to submit Spark jobs.

* Business analysts who use JDBC and ODBC client applications from a real-time view. The business analysts run monitoring queries to access aggregate results for 15 minutes. The results will be referenced by subsequent queries.

* Data analysts who publish notebooks drawn from batch layer, serving layer and speed layer queries. All of the notebooks must support native interpreters for data sources that are batch processed. The serving layer queries are written in Apache Hive and must support multiple sessions. Unique GUIDs are used across the data sources, which allow the data analysts to use Spark SQL.

The data sources in the batch layer share a common storage container. The following data sources are used:

* Hive for sales data

* Apache HBase for operations data

* HBase for logistics data by using a single region server.

End of Repeated Scenario.

You need to ensure that the analysts can query the logistics data by using JDBC APIs and SQL APIs. Which technology should you implement?

Answer: D

NEW QUESTION 14

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are implementing a batch processing solution by using Azure HDInsight.

You need to integrate Apache Sqoop data and to chain complex jobs. The data and jobs will implement MapReduce. What should you do?

Answer: F

NEW QUESTION 15

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are building a security tracking solution in Apache Kafka to parse security logs. The security logs record an entry each time a user attempts to access an application. Each log entry contains the IP address used to make the attempt and the country from which the attempt originated.

You need to receive notifications when an IP address from outside of the United States is used to access the application.

Solution: Create a consumer and a broker. Create a file import process to send messages. Run the producer.

Does this meet the goal?

Answer: B

NEW QUESTION 16

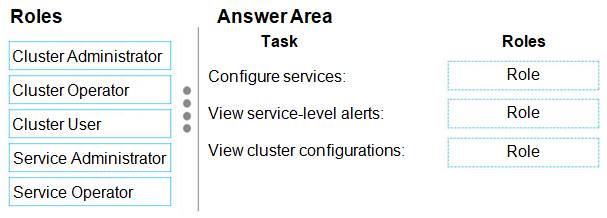

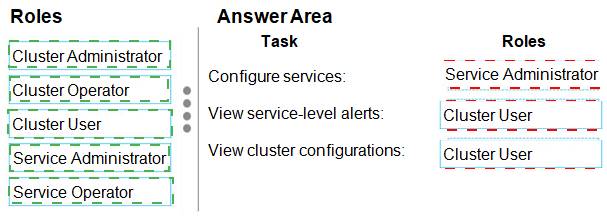

DRAG DROP

You have a domain-joined Azure HDInsight cluster. You plan to assign permissions to several support staff.

You need to assign roles to the staff so that they can perform specific tasks.

The solution must use the principle of least privilege.

Which role should you assign for each task? To answer, drag the appropriate roles to the correct tasks. Each role may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Answer:

Explanation:

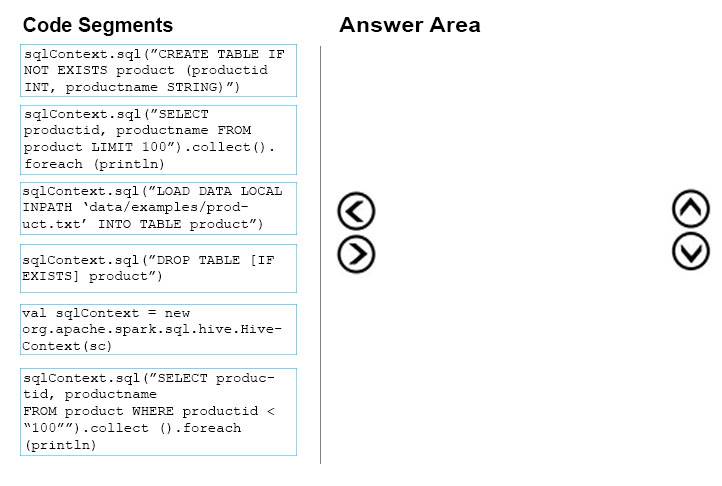

NEW QUESTION 17

DRAG DROP

You have a text file named Data/examples/product.txt that contains product information.

You need to create a new Apache Hive table, import the product information to the table, and then read the top 100 rows of the table.

Which four code segments should you use in sequence? To answer, move the appropriate code segments from the list of code segments to the answer area and arrange them in the correct order.

Answer:

Explanation:

val sqlContext = new org.apache.spark.sql.hive.HiveContext(sc)

sqlContext.sql("CREATE TABLE IF NOT EXISTS product (productid INT, productname STRING)")

sqlContext.sql("LOAD DATA LOCAL INPATH 'Data/examples/product.txt' INTO TABLE product")

sqlContext.sql("SELECT productid, productname FROM product LIMIT 100").collect().foreach(println)

References: https://www.tutorialspoint.com/spark_sql/spark_sql_hive_tables.htm

NEW QUESTION 18

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are building a security tracking solution in Apache Kafka to parse security logs. The security logs record an entry each time a user attempts to access an application. Each log entry contains the IP address used to make the attempt and the country from which the attempt originated.

You need to receive notifications when an IP address from outside of the United States is used to access the application.

Solution: Create new topics. Create a file import process to send messages. Start the consumer and run the producer.

Does this meet the goal?

Answer: A
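
The consumer in the scenario above has to decide, for each log entry it reads, whether the attempt came from outside the United States. A minimal sketch of that decision is shown here in plain Scala, with the Kafka client wiring left out. The "ip,country" line format, the `LogEntry` type, the country code "US", and all names are assumptions made for illustration; the question does not specify how log entries are encoded.

```scala
object ForeignAccessFilter {
  // Hypothetical entry shape: the question only says each entry holds
  // an IP address and a country.
  final case class LogEntry(ip: String, country: String)

  // Parses a hypothetical "ip,country" line; malformed lines yield None.
  def parse(line: String): Option[LogEntry] =
    line.split(",").map(_.trim) match {
      case Array(ip, country) if ip.nonEmpty && country.nonEmpty =>
        Some(LogEntry(ip, country))
      case _ => None
    }

  // Keeps only the entries whose country differs from the home country.
  def foreignAttempts(lines: Seq[String], homeCountry: String = "US"): Seq[LogEntry] =
    lines
      .flatMap(line => parse(line))
      .filterNot(_.country.equalsIgnoreCase(homeCountry))

  def main(args: Array[String]): Unit = {
    // Sample lines standing in for message values read from a topic.
    val sample = Seq("203.0.113.7,US", "198.51.100.23,FR", "bad line")
    foreignAttempts(sample).foreach { e =>
      println(s"ALERT: ${e.ip} from ${e.country}")
    }
  }
}
```

In a real consumer, the same filter would be applied to the value of each record returned by the consumer's poll loop, and the alert would go to whatever notification channel the solution uses.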
